Four months on: is Roblox any safer?

05/09/2025

In April, we published a deep dive investigation into Roblox after growing concerns about what children were experiencing on a platform used daily by millions of young people.

It uncovered some serious problems: adults and children could interact freely in virtual spaces with minimal safeguards, and inappropriate content – often overtly sexual – was easily accessible to young users.

During the course of that investigation, Roblox began rolling out new parental control tools for users under 13. While these represented a step in the right direction, they didn't go nearly far enough. Adults could still easily interact with children in the same virtual experiences with no effective age verification or separation. Our account registered as a 10-year-old was able to access highly suggestive environments featuring private bedrooms and avatars in BDSM-style outfits – and these concerning spaces were not difficult to find.

Since then, Roblox has announced a series of significant updates designed to better protect children on the platform. These changes represent the company's most comprehensive response yet to growing safety concerns from parents, researchers, and policymakers.

This is our updated Parents' Guide to Roblox. We've returned to the platform to explore these new changes and assess their impact on children's safety. Where possible, we've tested the new features using the same methodology that exposed the platform's vulnerabilities four months ago.

The updates Roblox has implemented include:

Significant adjustments to content maturity ratings for experiences across the platform

Users must be ID verified as 17+ to access certain games and experiences

Stricter content moderation and improved filtering systems

Enhanced parental controls giving parents greater oversight of their children's accounts and activities

Additionally, there are plans to extend age verification measures to all users.

When we returned to the platform, some improvements were immediately visible in our testing.

Experiences with overtly inappropriate themes that our child-registered researchers had stumbled upon almost instantly in April were now considerably harder to find and appeared blocked for users under 17. The "Boys and Girls Club Roleplay" experience that our 10-year-old avatar easily accessed previously seems to have been removed, and “Vibe Place”, a dimly lit hotel-like environment, was no longer available to accounts registered as under 17.

Chat moderation also seemed to show a marked improvement, with stricter filtering of potentially harmful communications.

However, notable problems persist.

The most serious concern remains unchanged: while ID verification appears to be a robust gatekeeper for keeping children away from restricted experiences, it’s still remarkably easy to create an account with a false age. Adults can continue to create accounts pretending to be children.

And while Roblox appears to be successfully tackling experiences with overtly inappropriate themes – like the sexually suggestive hotel environments we documented in April – more innocuous-seeming experiences such as “Mic Up” or “Therapy sessions” still pose problems for child safety.

In these outwardly benign social spaces, 13-year-olds with phone-verified accounts can still chat directly with adults using voice communication. More concerning still, these environments offer plenty of open but secluded corners of virtual maps where users can pull someone aside for what amounts to a private voice conversation, away from other players.

Roblox may have cleaned up its most obvious problems, but without meaningful separation between adult and child users, serious risks remain.

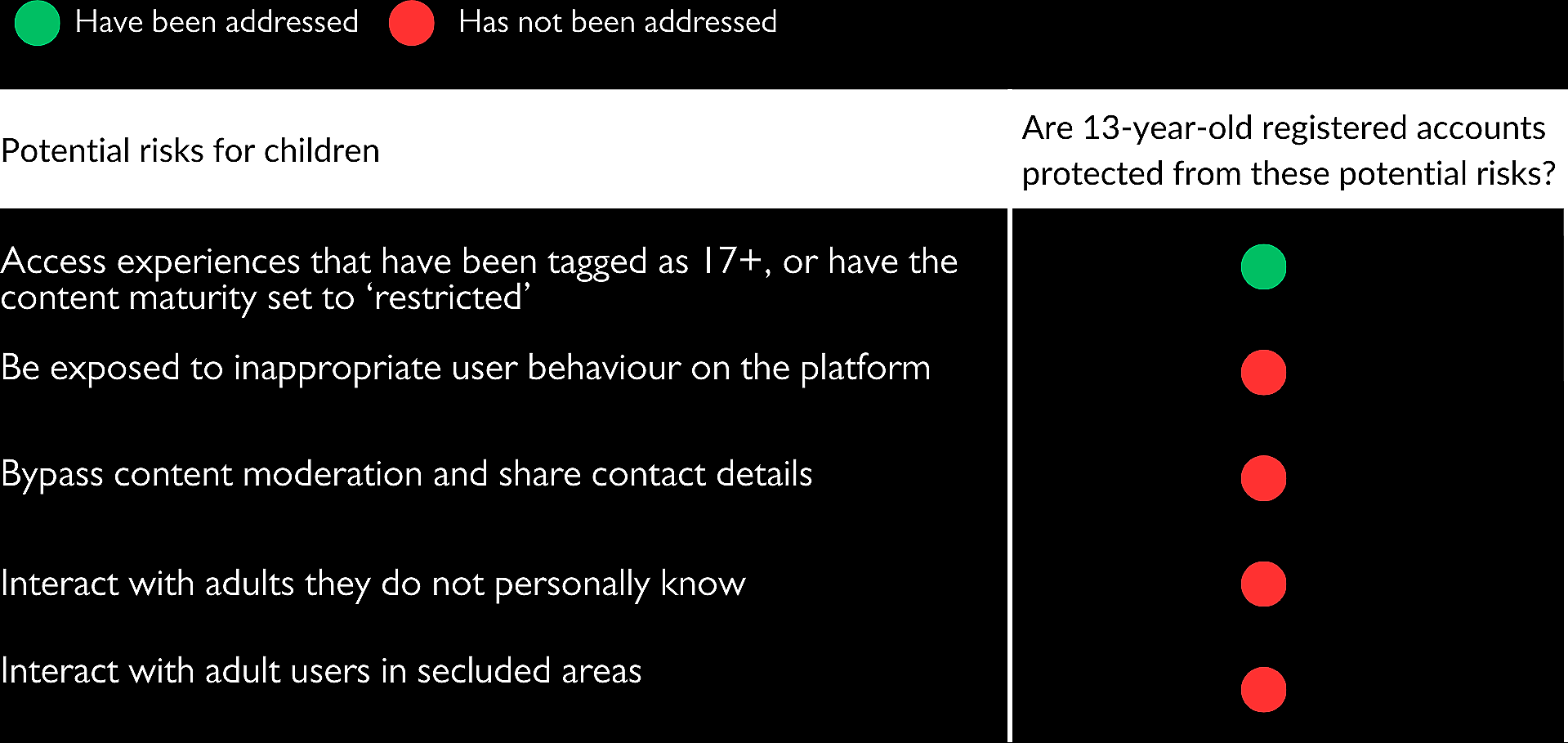

What risks have been addressed?

What we found: the detail

To understand how the updates implemented by Roblox affect children in practice, we returned to the platform and tested it from a user perspective. As before, we created a range of accounts registered at different ages, including children and adults, to explore what each could see and do.

Our focus was on what happens in real time during game play – what children encounter, who they can interact with, and how safety features function day to day – rather than on parental dashboards, notifications or screen-time settings.

Our aim was to replicate the experience of a child using Roblox, rather than relying only on Roblox’s policy statements or technical announcements.

Avatar testing methodology

To test and explore Roblox’s new features, we created three accounts: a 5-year-old, a 13-year-old, and an 18-year-old.

We started by testing features with non-verified accounts, then gradually increased the level of verification for the 13-year-old and 18-year-old accounts, first with phone verification, and then with ID verification for the 18-year-old account.

We explored a variety of experiences to understand what the different avatars could see and do. As with our first investigation, we observed other users – following some and recording how they interacted with each other as well as with our avatars. We did not initiate interactions with other users, to ensure we did not influence their behaviour in any way. We did interact with other avatars created by our research team.

We carried out an initial scoping phase on 4th August, using a 13-year-old account and an existing age-verified 42-year-old account from our previous round of testing.

Tests were carried out on 4th August and between 25th August and 5th September 2025.

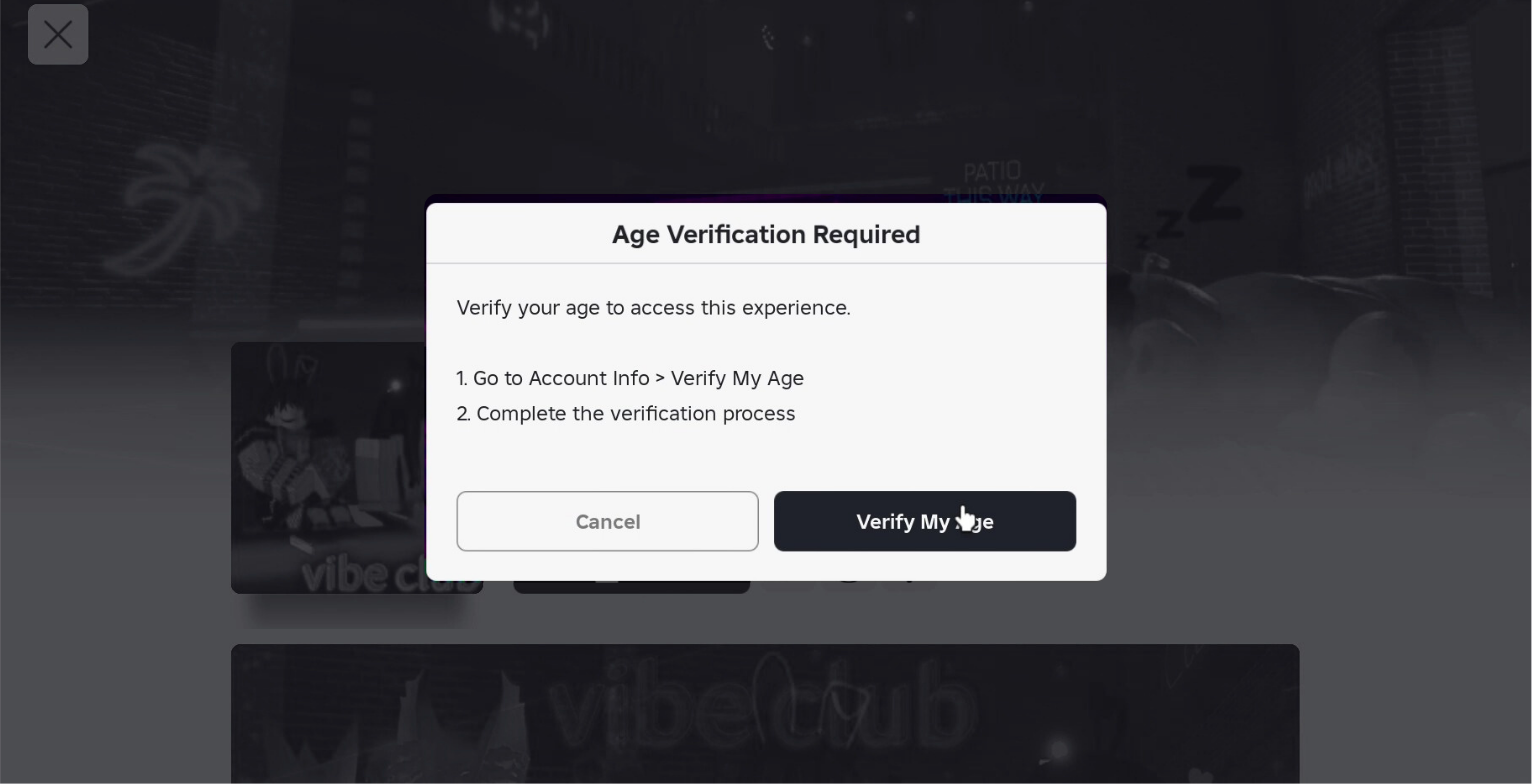

Age verification and age-restricted experiences

Roblox has implemented new features to enhance age verification and restrict access to certain content. The use of age-estimation technology and ID verification means that only verified 17+ users can access experiences designed for adults.

On 03/09/2025, Roblox announced that it will introduce age verification for all users by the end of the year.

What does this mean for users?

Users who wish to verify their accounts as 17+ must provide an official ID or go through an age-estimation process.

Roblox is tagging experiences with overtly inappropriate themes as 17+. Only age-verified 17+ users can enter these places; they are closed off to under-17s.

Games and social experiences with adult themes that were previously accessible, such as "Vibe Place," are now tagged as 17+ and are restricted. While our child avatars were able to access these experiences in our last investigation, they were unable to do so this time.

Age verification required to access 17+ rated experiences.

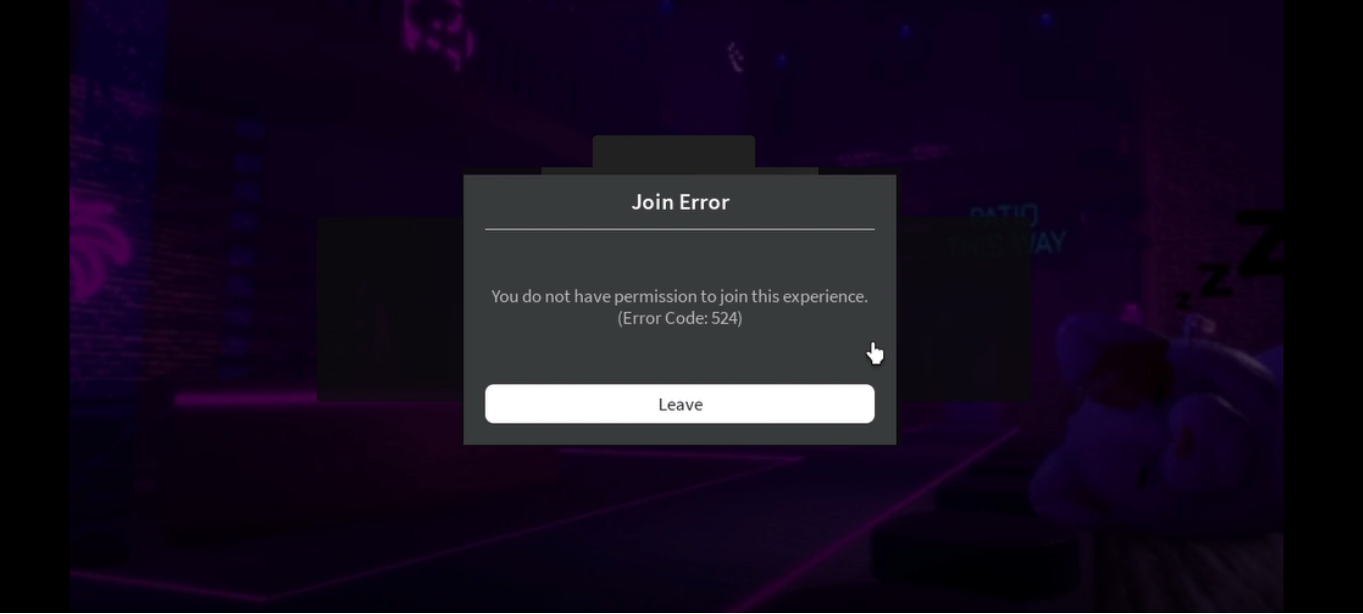

Error code preventing unverified and under-age users from accessing 17+ experiences, even if they are invited by a verified 17+ user.

What risks still exist?

While Roblox is taking steps to prevent under-17s from entering adult spaces, adults are still able to enter children's spaces, and can play, chat and speak with phone-verified users registered as 13+.

It is still easy to create a non-verified account with a false age, which means an adult can create an account pretending to be a child.

Users registered as 13+ can still voice-chat with other users, children or adults, on social spaces, so long as they verify their account with a phone number.

There is nothing stopping under-13s or adults from creating an account registered as a 13+ child and speaking with other users, adults or children, as long as they add a phone number to the account.

On some experiences, adult users are flagged with an 18+ label above their heads. But many spaces allow adults to go unnoticed among child-registered users.

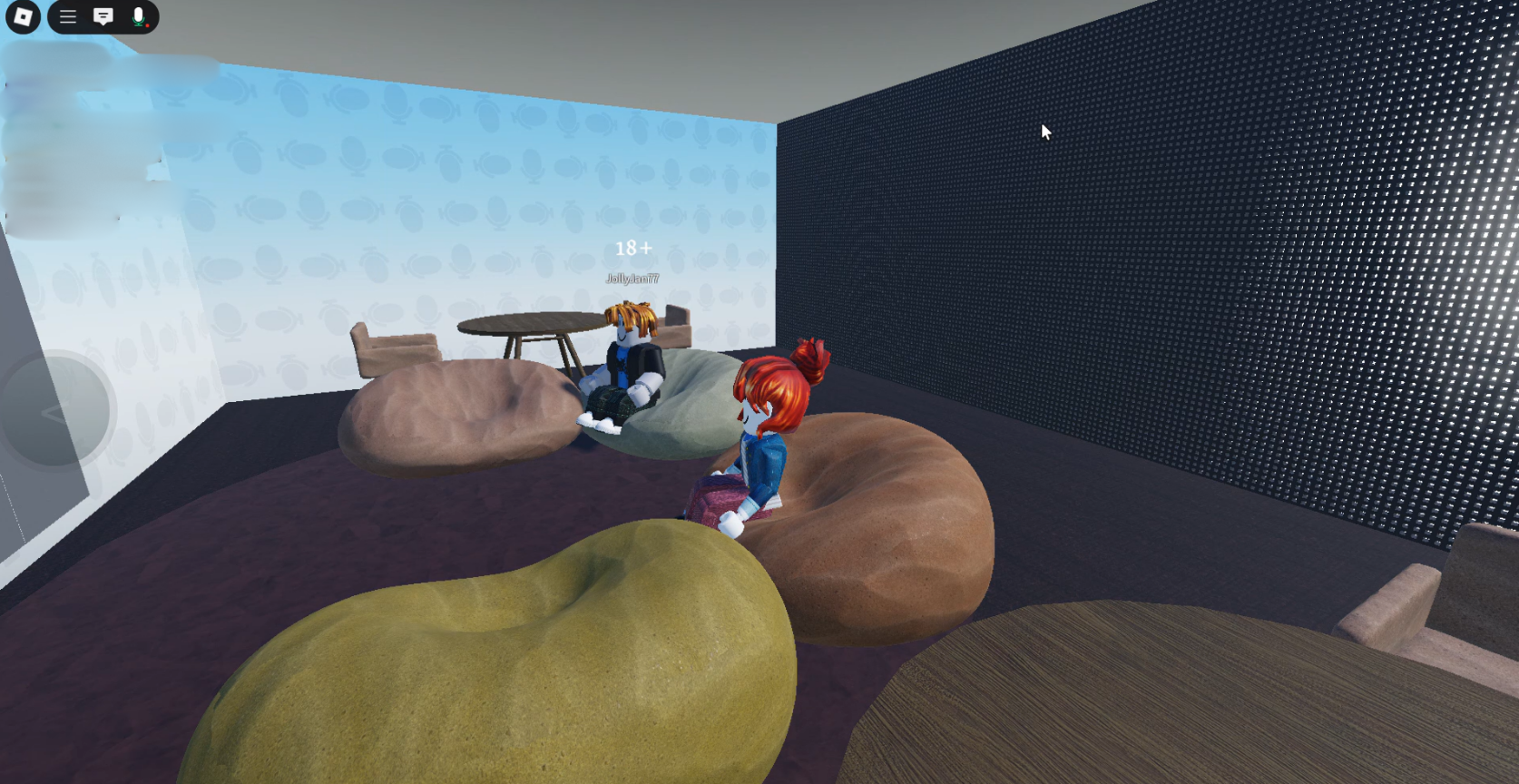

Our adult, age-verified avatar being flagged as 18+ on “Mic Up”

Our adult, age-verified avatar not being flagged as 17+ on “Therapy”

(Inappropriate) interactions with strangers

Roblox has launched Trusted Connections, a new feature that allows users to chat more freely with people they know and trust. Users now need to verify their age to access unfiltered chats. Users between 13 and 17 who pass the age check can add other users in the same age range to their Trusted Connections. Users 18 or older can add teens (13-17) to their Trusted Connections only through in-person QR code scans or phone numbers. This is designed to ensure these connections are based on real-life relationships.

To prevent potentially inappropriate interactions, Roblox has also restricted private, virtual spaces like bedrooms or clubs.

What does this mean for users?

Within a Trusted Connection, users can privately voice-chat and use unfiltered text chat.

Games with suggestive themes, such as those with locked bedrooms, are not accessible to users under 17.

In more “neutral” themed spaces, it appears users are no longer able to lock doors and trap others in a room with them, which was previously possible.

What risks still exist?

Inappropriate interactions between adult and child accounts can still occur outside of Trusted Connections.

On-platform private messaging is still permitted between accounts registered over 18 and those over 13, regardless of whether they know each other in real life.

In-game public voice chat is still permitted between accounts registered over 18 and those over 13, regardless of whether they know each other in real life.

It is still possible to circumvent chat restrictions. Our adult avatar was able to ask for, and receive, our child avatar's Snapchat details by using the word "pans" ("snap" backwards) or by typing in different fonts.

Filters and moderation on in-game voice chat do not seem to be fully effective, as our adult and child avatars were easily able to exchange contact details.

Our adult-verified avatar chatting with our child avatar with a different font to bypass chat moderation.

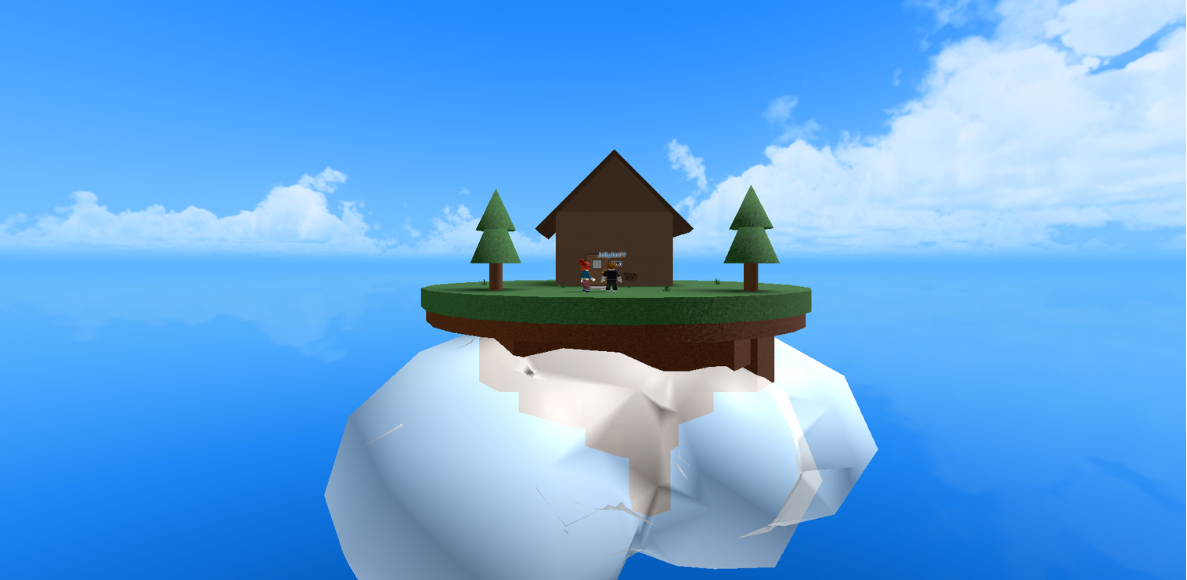

Our 18-year-old avatar and our 13-year-old avatar on a private island on "Therapy", sharing Snapchat details with each other over voice chat.

Roblox appears to be tackling experiences with overtly inappropriate themes, but many 13+ spaces still have secluded corners where adult and child users can have near-private conversations.

For example, our adult and child avatars were able to go to a secluded, private island in the "Therapy" experience, where they could voice chat freely and exchange social media details.

Similarly, on "Mic Up," our avatars found semi-hidden rooms where they could have private conversations.

Private island on “Therapy” accessible to child and adult registered users.

Chat moderation

Roblox has implemented new technology to automatically detect and shut down servers with a high volume of inappropriate user behaviour. This is meant to apply even to experiences that are otherwise compliant with policies. As before, the platform also uses filters on text and voice chat to prevent inappropriate content.

What does this mean for users?

Users should be less likely to access servers with a high volume of inappropriate user behaviour.

What risks still exist?

Despite these measures, loopholes from our previous testing still exist.

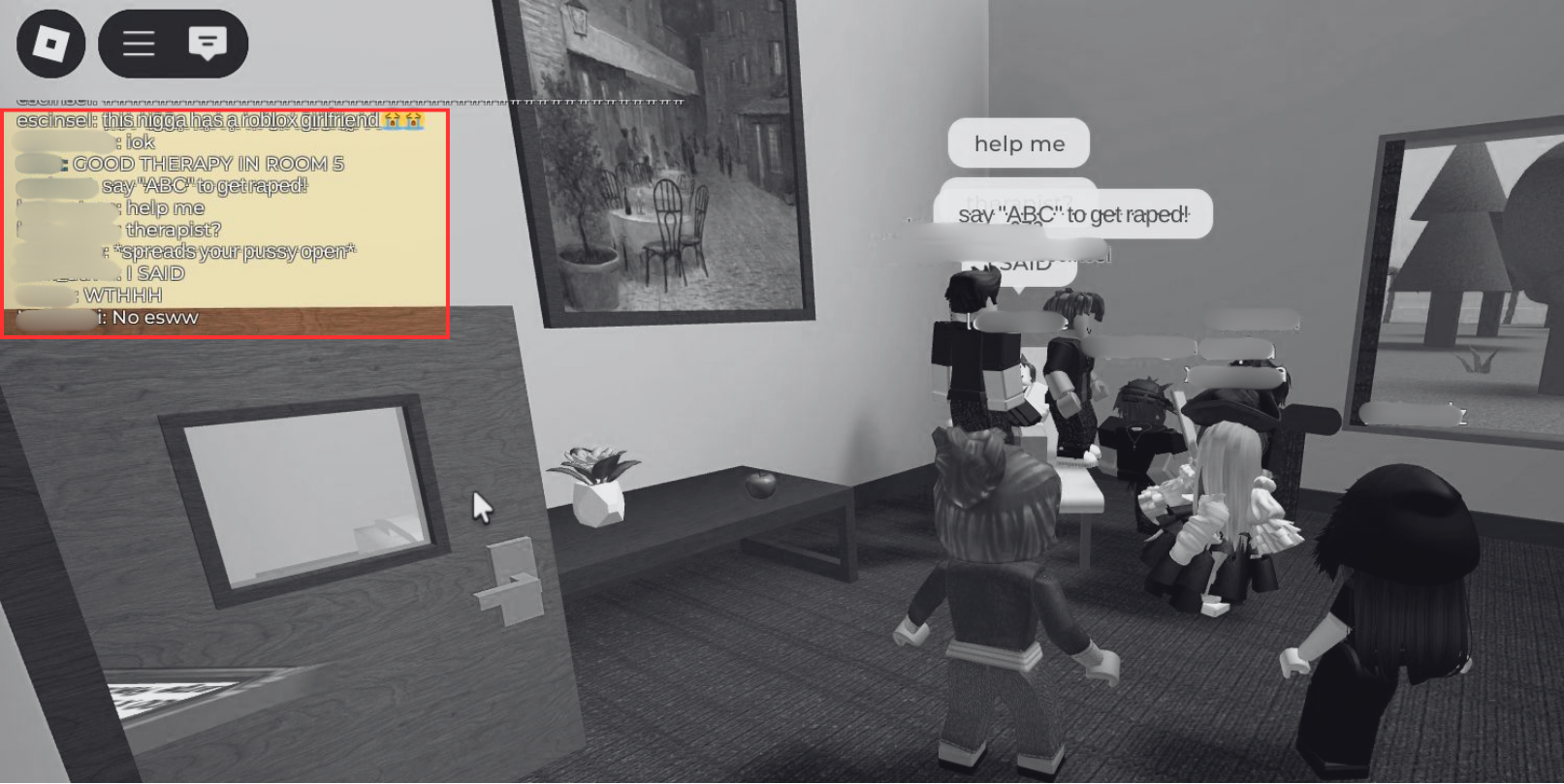

Our child avatar was still exposed to inappropriate behaviour in text chat and over audio. This included racial slurs, sexual acts and swear words.

Our avatars were also able to bypass voice chat moderation and easily exchange social media details.

Inappropriate user behaviour in-chat on “Therapy” (taken from August 4)